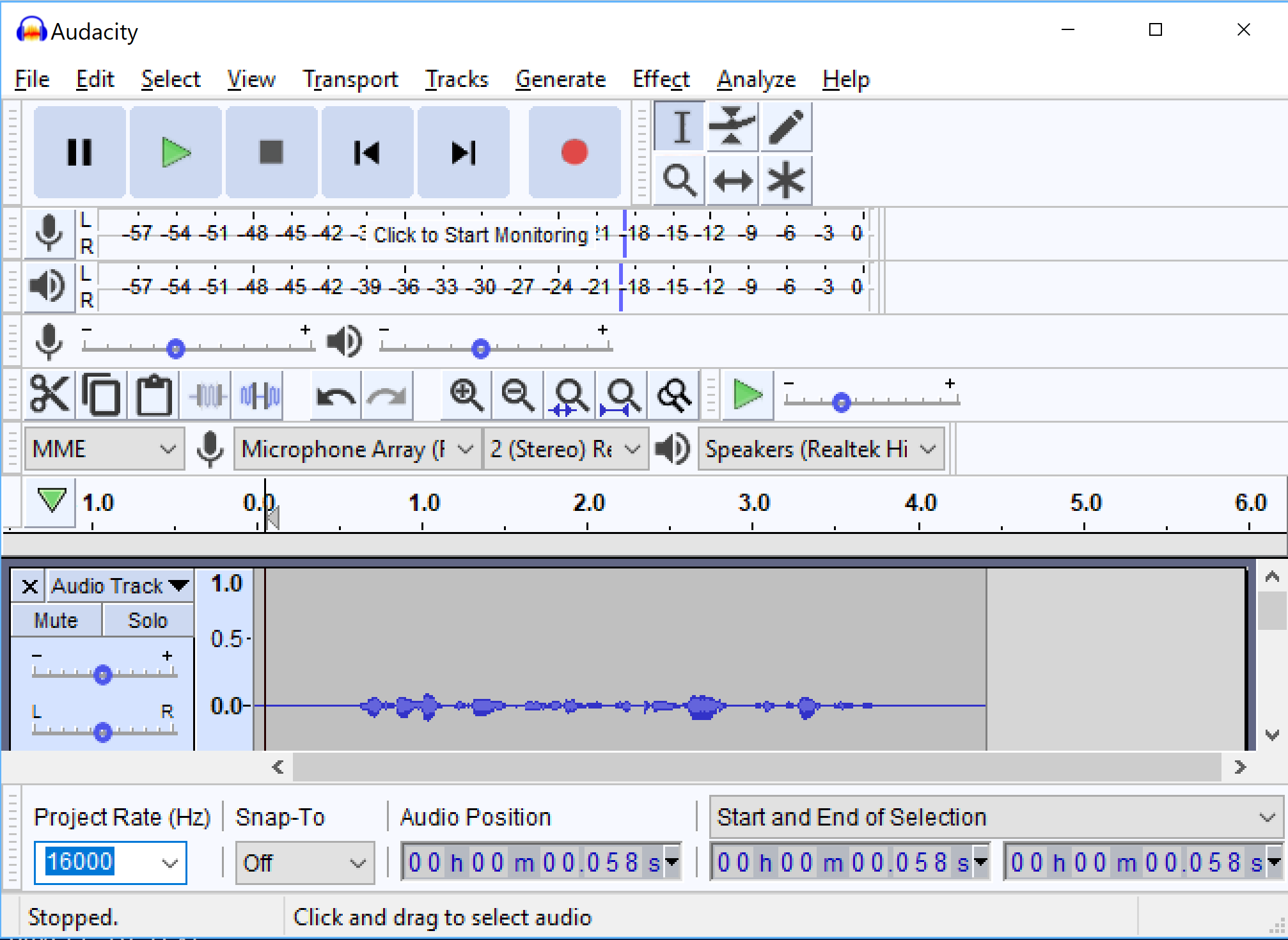

At the beginning of 2020, SCIC announced a pilot project, Speech to Text (S2T), to develop a corporate speech recognition solution that leverages the latest advances in artificial intelligence and natural language processing. Since then, a lot of progress has been made thanks to the contribution of SCIC's interpreters. SCIC uses Microsoft Azure, the Commission's current cloud solution, whose standard speech recognition service creates the transcriptions that volunteer interpreters then assess. Any errors are corrected and the revised transcription is fed back into the model to improve its accuracy. In this way the machine 'learns' and gets better.

Colleagues from the 23 booths participated in the project with their linguistic expertise, verifying and validating the automated transcriptions that Microsoft Azure produces in their respective languages. This ensures the multilingual nature of the project and helps establish SCIC as a key player in the field of speech activities within the EC. After the initial pilot phase, the number of interpreters working on the project was gradually expanded, and the project is now at cruising speed.

As interpreters verify the automated transcription, they need to see the text with a new set of eyes altogether. That feels counterintuitive to language professionals, and the shift has not been easy. Each week, the volunteers 'meet' to discuss issues they come across during their work. For example, they have had to learn to ignore punctuation, capital letters, and hyphens, because the transcription at this stage comes out 'naked'.

Customization options vary by language or locale. To verify support, see Language and voice support for the Speech service. For more information, see Custom Speech and Speech-to-text REST API.
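Both the volunteers' review of 'naked' transcripts and the accuracy evaluation that Custom Speech offers come down to comparing a machine transcript with a corrected reference. The sketch below shows one common way to quantify that comparison, word error rate (WER): the word-level edit distance (substitutions, insertions, deletions) divided by the number of reference words. It assumes a simple normalization policy (lowercase, hyphens split into separate words, ASCII punctuation dropped); the names `normalize` and `word_error_rate` are hypothetical, and this is not SCIC's or Microsoft's code.

```python
import string


def normalize(text: str) -> list[str]:
    """Return a 'naked' word list: lowercase, hyphens split, punctuation removed.

    Hypothetical policy: hyphens become spaces, then every ASCII punctuation
    character is deleted, so "Speech-to-Text," becomes ["speech", "to", "text"].
    """
    text = text.lower().replace("-", " ")
    text = text.translate(str.maketrans("", "", string.punctuation))
    return text.split()


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level Levenshtein distance / number of reference words."""
    ref = normalize(reference)
    hyp = normalize(hypothesis)
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the previous reference prefix
    # and hyp[:j]; the first row is j insertions against an empty reference.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # i deletions against an empty hypothesis
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # delete a reference word
                curr[j - 1] + 1,     # insert a hypothesis word
                prev[j - 1] + cost,  # substitute (or match when cost is 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    corrected = "The Commission's interpreters verify speech-to-text output."
    # Differs only in punctuation, case and hyphens, so no errors are counted.
    print(word_error_rate(corrected, "the commissions interpreters verify speech to text output"))  # 0.0
    # Two substitutions and one deletion over 8 reference words.
    print(word_error_rate(corrected, "the commission interpreters verified speech to text"))  # 0.375
```

Normalizing both sides first means that punctuation and capitalization, which the 'naked' output never contains, are not counted as errors; only substituted, inserted, or deleted words affect the score.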

With Custom Speech, you can evaluate and improve the accuracy of speech recognition for your applications and products. A custom speech model can be used for real-time speech-to-text, speech translation, and batch transcription. Real-time speech-to-text is available via the Speech SDK and the Speech CLI.

A hosted deployment endpoint isn't required to use Custom Speech with the Batch transcription API, so you can conserve resources if the custom speech model is only used for batch transcription. For more information, see Speech service pricing.

Out of the box, speech recognition uses a Universal Language Model as a base model that is trained with Microsoft-owned data and reflects commonly used spoken language. The base model is pre-trained with dialects and phonetics representing a variety of common domains. When you make a speech recognition request, the most recent base model for each supported language is used by default. The base model works very well in most speech recognition scenarios.

A custom model can be used to augment the base model. Providing text data to train the model improves recognition of domain-specific vocabulary used by the application, and providing audio data with reference transcriptions improves recognition under the application's specific audio conditions.

Batch transcription

Batch transcription is used to transcribe a large amount of audio in storage. You can point to audio files with a shared access signature (SAS) URI and asynchronously receive transcription results. Use batch transcription for applications that need to transcribe audio in bulk, such as:

- Transcriptions, captions, or subtitles for pre-recorded audio.

Batch transcription is available through:

- Speech-to-text REST API: To get started, see How to use batch transcription and Batch transcription samples (REST).
- Speech CLI: The Speech CLI supports both real-time and batch transcription, and it provides built-in help for batch transcriptions.
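As a rough illustration of the batch workflow described above, the following sketch builds the HTTP request that creates a batch transcription for audio files addressed by SAS URIs. It assumes version 3.1 of the Speech-to-text REST API: the `/speechtotext/v3.1/transcriptions` endpoint, the `Ocp-Apim-Subscription-Key` header, and the `contentUrls`, `locale`, `displayName` and optional `model` body fields. Check all of these against the current API reference. The function name `build_batch_request`, the region, the key, and the storage URL are hypothetical placeholders, and the request is only built, not sent.

```python
import json
import urllib.request
from typing import Optional


def build_batch_request(
    region: str,
    key: str,
    sas_urls: list[str],
    locale: str,
    display_name: str,
    custom_model_url: Optional[str] = None,
) -> urllib.request.Request:
    """Build (but do not send) a POST request that creates a batch transcription.

    Assumption: v3.1 of the Speech-to-text REST API; verify the endpoint and
    field names against the current reference before use.
    """
    body = {
        "contentUrls": sas_urls,  # audio files in storage, each addressed by a SAS URI
        "locale": locale,
        "displayName": display_name,
    }
    if custom_model_url is not None:
        # Optional Custom Speech model; batch use needs no hosted deployment endpoint.
        body["model"] = {"self": custom_model_url}
    url = f"https://{region}.api.cognitive.microsoft.com/speechtotext/v3.1/transcriptions"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_batch_request(
        region="westeurope",                # hypothetical region
        key="<your-speech-resource-key>",   # placeholder, never hard-code real keys
        sas_urls=["https://example.blob.core.windows.net/audio/meeting1.wav?<sas-token>"],
        locale="en-US",
        display_name="Plenary session batch",
    )
    print(req.get_method(), req.full_url)
    # Submitting it with urllib.request.urlopen(req) would create the job;
    # the service then transcribes the audio asynchronously.
```

Because the job runs asynchronously, a client would submit it once and then check back for the results later, rather than holding a connection open while the audio is processed.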
With real-time speech-to-text, the audio is transcribed as speech is recognized from a microphone or file. Use real-time speech-to-text for applications that need to transcribe audio in real time, such as:

- Transcriptions, captions, or subtitles for live meetings.

To compare pricing of real-time to batch transcription, see Speech service pricing. For a full list of available speech-to-text languages, see Language and voice support.
